I have wanted to learn Kubernetes (k8s) and write this blog series for a long time. It has finally started (thanks to the null cloud security study group), so without wasting any more time, let’s get started. I am learning this with a security mindset: finding common misconfigurations and understanding the development process well enough to understand the mitigations.

K8s is a container orchestrator. Before diving into the details, let’s see what orchestration is.

Orchestration and Its Need

Orchestration is the automated configuration, management, and coordination of computer systems, applications, and services. Orchestration helps IT to more easily manage complex tasks and workflows.

Source: RedHat

In our case, orchestration entails running and managing containers across a group of machines (physical/virtual) that are linked via a network.

The majority of modern applications are built in a distributed manner, which means hosting different components on different machines that are linked together to work as a single system (such components are often referred to as microservices). The problem with this kind of infrastructure is managing all these components and getting them to work together. Each service requires resources, monitoring, and troubleshooting if a problem arises. While it may be possible to do all of that manually for a single service, when dealing with multiple services, each with its own container, you’ll need automated processes.

Enter Kubernetes (or any container orchestrator, but we are going to talk about k8s as it is the de facto standard). If you are interested in what else is happening in the orchestration space, refer to the CNCF Landscape.

CNCF K8S Card

In k8s, a set of machines (nodes) that run containers is referred to as a cluster, and each cluster can be treated as a single computer that focuses on what the environment should look like based on the user’s specifications. Technically speaking, k8s compares the current state of the objects in a cluster to the desired state given in the specifications. If the two differ, k8s will create or destroy objects until they match.
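The compare-and-correct idea can be sketched in a few lines of Python. This is only a toy model under stated assumptions: `ToyCluster`, `reconcile`, and the app names are hypothetical and are not part of any Kubernetes API. It just shows current state being driven towards desired state.

```python
from dataclasses import dataclass, field


@dataclass
class ToyCluster:
    """Hypothetical in-memory stand-in for a cluster; not a Kubernetes API."""
    running: dict[str, int] = field(default_factory=dict)  # app name -> running replicas


def reconcile(cluster: ToyCluster, desired: dict[str, int]) -> list[str]:
    """Compare current state with desired state and return the actions taken."""
    actions = []
    for app in sorted(set(desired) | set(cluster.running)):
        want = desired.get(app, 0)
        have = cluster.running.get(app, 0)
        if have < want:
            actions.append(f"create {want - have} x {app}")
        elif have > want:
            actions.append(f"destroy {have - want} x {app}")
        # Apply the change so the current state now matches the desired state.
        if want:
            cluster.running[app] = want
        else:
            cluster.running.pop(app, None)
    return actions


cluster = ToyCluster(running={"web": 1, "old-job": 2})
desired = {"web": 3, "db": 1}
print(reconcile(cluster, desired))
print(reconcile(cluster, desired))  # second pass finds nothing to do
```

The second call returns an empty list because the first pass already brought the toy cluster in line with the specification, which is the property a real control loop relies on when it runs repeatedly.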

Kubernetes Components

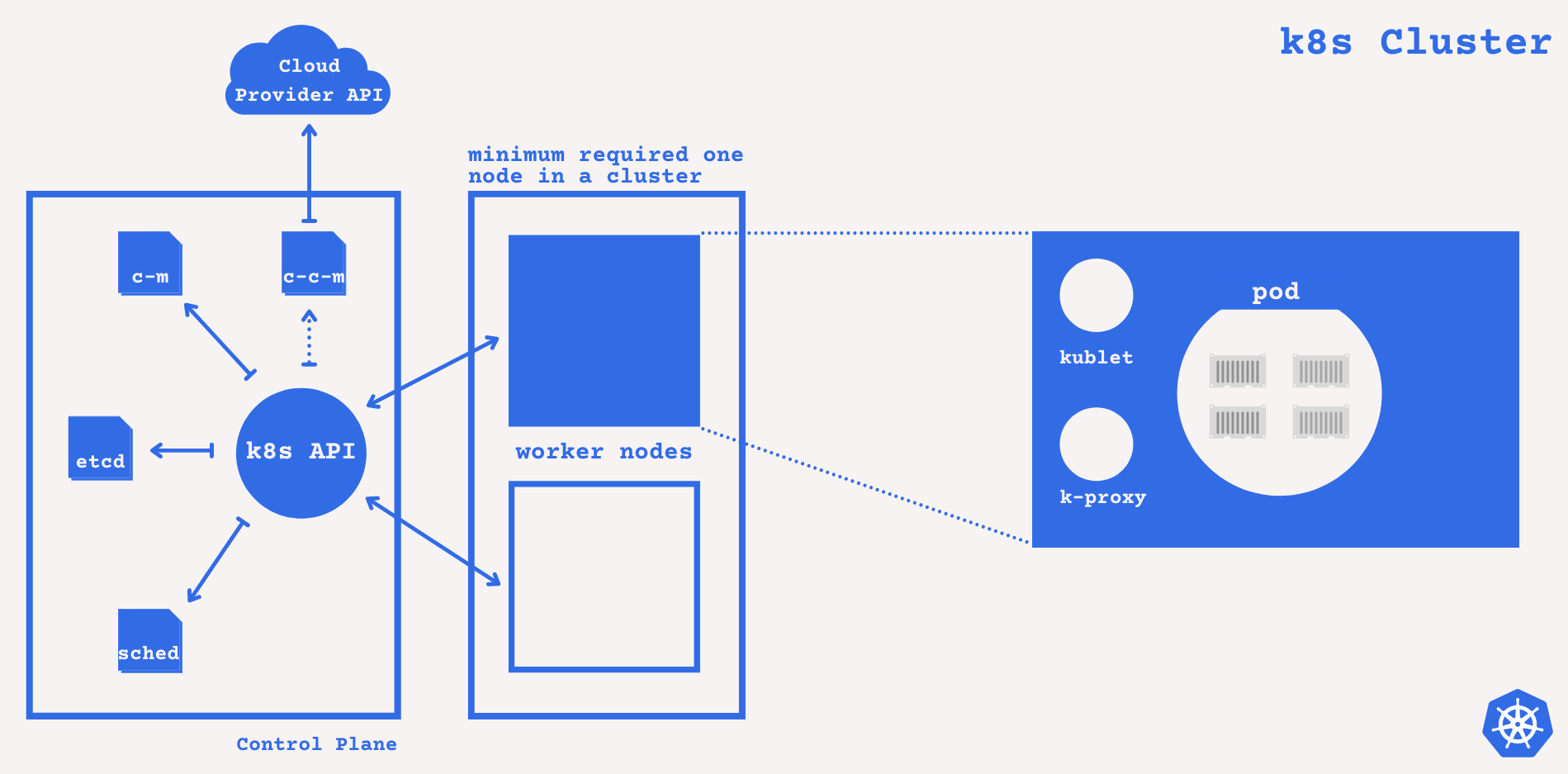

k8s Components

Everything inside a cluster is managed by the Control Plane. It makes global decisions about the cluster (for example, scheduling) and detects and responds to cluster events, so it makes sense to look at the components of the control plane first.

The control plane can reside on any machine in a cluster, and can also be set up on separate machines (not shared with the worker nodes) for high availability and fault tolerance.

Control Plane (Master Node) Components

- kube-apiserver - It exposes the Kubernetes API and acts as the frontend for the k8s control plane. kube-apiserver is intended to scale horizontally, i.e. by deploying more instances. You can run multiple instances of kube-apiserver and balance traffic between them.

- etcd - A consistent and highly available key-value store that Kubernetes uses as its backing store for all cluster data. If your Kubernetes cluster relies on etcd as its backing store, make sure you have a backup strategy in place in case of failure.

- kube-scheduler - It makes scheduling decisions based on the specifications. This component watches for pods with no assigned node and assigns a node to each of them.

- kube-controller-manager - It runs controller processes (control loops that watch the shared state of the cluster and make changes to move it towards the desired state). There are different types of controllers:

  - Node Controller - notices and responds when a node goes down.

  - Job Controller - watches for Job objects that represent tasks, and creates pods to run those tasks.

  - Endpoint Controller - joins services and pods.

  - Service Account & Token Controller - creates the default account and API access token for new namespaces.

- cloud-controller-manager - Similar to kube-controller-manager, but specific to the cloud service provider. It embeds cloud-specific control logic. A few controllers can be cloud dependent:

  - Node Controller - checks with the cloud provider to see whether a node has been deleted in the cloud after it stops responding.

  - Route Controller - sets up routes in the underlying cloud infrastructure.

  - Service Controller - creates, updates, and deletes cloud provider load balancers.
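To make the kube-scheduler description more concrete, here is a minimal sketch of "find pods with no assigned node and give them one". The `Node` and `Pod` classes, the CPU numbers, and the "most free CPU wins" rule are all assumptions made for illustration. The real scheduler filters and scores nodes on many more criteria.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Node:
    name: str
    free_cpu: int  # hypothetical unit: millicores still available


@dataclass
class Pod:
    name: str
    cpu_request: int
    node_name: Optional[str] = None  # None means "not scheduled yet"


def schedule(pods: list[Pod], nodes: list[Node]) -> None:
    """Assign every unscheduled pod to the node with the most free CPU that fits it."""
    for pod in pods:
        if pod.node_name is not None:
            continue  # already bound to a node, nothing to decide
        candidates = [n for n in nodes if n.free_cpu >= pod.cpu_request]
        if not candidates:
            continue  # stays pending until capacity appears
        best = max(candidates, key=lambda n: n.free_cpu)
        pod.node_name = best.name
        best.free_cpu -= pod.cpu_request


nodes = [Node("node-a", 1000), Node("node-b", 500)]
pods = [Pod("api", 600), Pod("cache", 500), Pod("batch", 2000)]
schedule(pods, nodes)
for pod in pods:
    print(pod.name, "->", pod.node_name or "Pending")
```

In this toy run the `batch` pod stays pending because no node has enough free CPU, which mirrors how an unschedulable pod waits until the cluster can satisfy its specification.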

That’s all for the control plane components. Let’s move ahead and see which node components the control plane talks to.

Worker Node Components

Every node has node components that keep pods running and provide the Kubernetes runtime environment.

- kubelet - It is k8s specific. It’s an agent that runs on each node in the cluster. It makes sure that containers are running in a Pod based on its PodSpec.

- kube-proxy - kube-proxy is a network proxy that runs on each node in your cluster and implements part of the Kubernetes Service concept. If the operating system has a packet filtering layer and it is available, kube-proxy will use it. Otherwise, kube-proxy forwards the traffic itself.

- Container Runtime - The container runtime (CRI-O, Docker, etc.) is the software that is responsible for running containers. [ To learn more click here ]

- Pod - A pod is a group of one or more containers with shared storage and network resources, as well as a specification for how the containers should be run. A Pod’s shared context is a collection of Linux namespaces, cgroups, and potentially other isolation facets - the same things that isolate a Docker container. Individual applications may be further sub-isolated within the context of a Pod. k8s manages pods rather than individual containers. [ To learn more click here ]
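The Service idea that kube-proxy helps implement (one stable address in front of a changing set of pods) can be modelled roughly as below. `ToyServiceProxy` and every address in the example are made up, and round-robin is chosen only to keep the output predictable. The real kube-proxy typically programs the operating system's packet filtering rules rather than handling each connection in a Python-style loop.

```python
from itertools import cycle


class ToyServiceProxy:
    """Hypothetical model of a Service: one stable address, many Pod backends."""

    def __init__(self) -> None:
        self._backends = {}  # service address -> round-robin iterator over Pod addresses

    def set_endpoints(self, service_addr: str, pod_addrs: list[str]) -> None:
        # Called whenever the set of Pods behind a Service changes.
        self._backends[service_addr] = cycle(pod_addrs)

    def route(self, service_addr: str) -> str:
        # Pick the next Pod for a connection sent to the Service address.
        return next(self._backends[service_addr])


proxy = ToyServiceProxy()
proxy.set_endpoints("10.96.0.10:80", ["10.244.1.5:8080", "10.244.2.7:8080"])
for _ in range(3):
    print(proxy.route("10.96.0.10:80"))
```

Clients only ever see the Service address, so pods can come and go behind it as long as the endpoint list is kept up to date.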

Now we have a basic idea of the k8s architecture. Let’s do some practical stuff: set up our own cluster and see how things work. Refer to Setting Up My Own Cluster in k8s.