You don’t always need money to get expensive things; sometimes hard work pays off. And yes, nullcon trainings are still expensive for me xD, so I am grateful that I got the chance to attend one.

One year ago, I was going through the nullcon training schedule, trying to understand how the trainings were structured and how much I could learn from them, because the actual training was too expensive for me. Cut to March 1st, 2021, when I was attending a nullcon training called “DEVSECOPS – AUTOMATING SECURITY IN DEVOPS” by Rohit Salecha, not only as an attendee but also as a moderator for the event, since I was one of the employees at Payatu.

Let me start off by giving a brief about myself: I had no freaking clue about DevSecOps… When the Winja CTF team offered me a training at nullcon this year (because of my contribution to the Winja CTF), I was super hyped. I was not sure whether I was ready for it, but I was excited. I had to get ready before the actual training started, so I searched for material but had no idea what to look for. At last, I went to my guruji, Anant Shrivastava, and he sent me a blog post/project by the mentor himself, https://www.rohitsalecha.com/project/practical_devops/, which I took some time to go through and which helped me get started with DevOps. After that, I felt ready for the training.

The course was led by Rohit Salecha, an associate director at NotSoSecure, who has delivered several trainings at Black Hat, nullcon, OWASP, etc. He has been involved in the information security domain for 10+ years. During the training he was joined by two awesome people who helped all of us whenever we hit a glitch or needed any help. Kudos to Abhijay and Jovin.

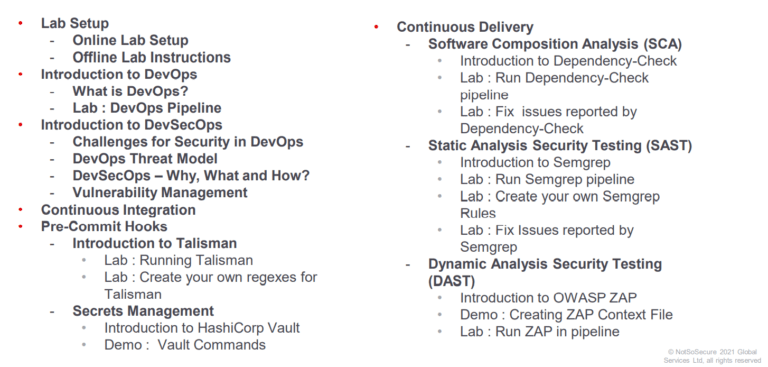

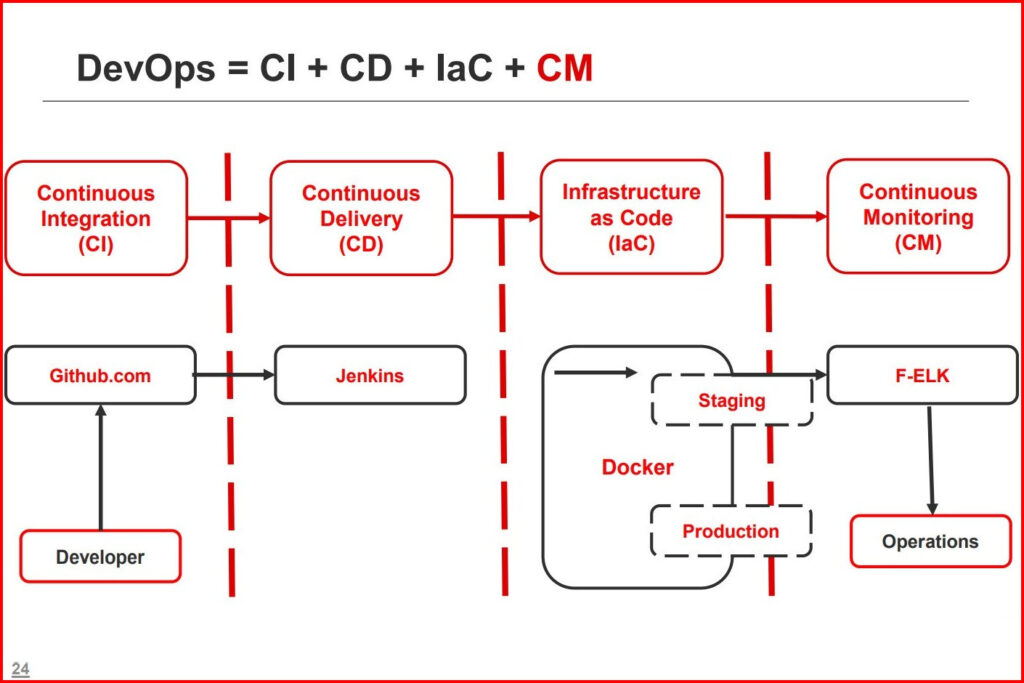

Over the course of 4 days, we started off with the basics of DevOps and covered concepts like Continuous Integration (CI), Continuous Delivery (CD), Infrastructure as Code (IaC) and Continuous Monitoring (CM). Rohit also covered the workflow of setting up a DevSecOps pipeline in AWS, followed by the challenges and enablers of DevSecOps. In the process, we learnt about and tried out lots of tools, such as Talisman, HashiCorp Vault and Semgrep, to name a few. We were given both an online and an offline lab environment for the hands-on sessions and further practice. Looking at the codebase of the complete lab setup blew my mind. Kudos to the NotSoSecure team for all the effort.

Day 1

The session started by familiarizing us with the lab environment. Then we jumped straight into DevOps, starting with a small demo of how a DevOps pipeline works.

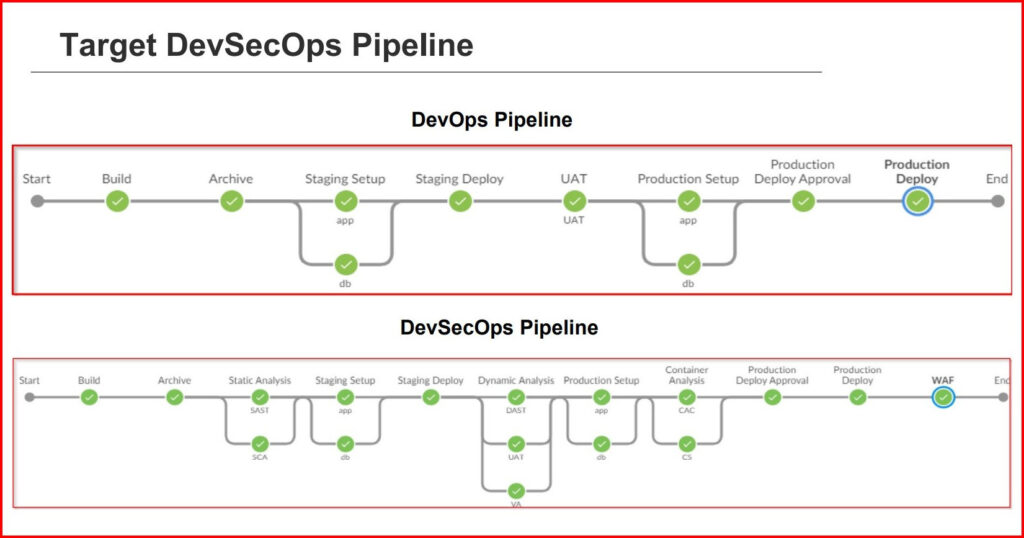

Then we were taught the What, Why and How of DevSecOps, followed by threat modelling. Comparing the two pipelines side by side, DevOps and DevSecOps, we saw a significant improvement in efficiency and in how security is managed.
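To make the DevOps vs DevSecOps comparison a bit more concrete, here is a minimal sketch of a pipeline in which security checks run as gating stages between the usual build and deploy steps. The stage names, the `security_gate` flag and the always-pass/always-fail check functions are hypothetical placeholders, not the lab's actual Jenkins setup; the point is only that a failing security stage stops the pipeline early instead of the problem surfacing after release.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class Stage:
    name: str
    run: Callable[[], bool]  # returns True if the stage passed
    security_gate: bool = False  # hypothetical flag marking a security check


def run_pipeline(stages: List[Stage]) -> Tuple[bool, List[str]]:
    """Run stages in order and stop at the first failure.

    Returns (success, names of the stages that were executed).
    """
    executed = []
    for stage in stages:
        executed.append(stage.name)
        if not stage.run():
            kind = "security gate" if stage.security_gate else "stage"
            print(f"[FAIL] {kind} '{stage.name}' failed, stopping pipeline")
            return False, executed
        print(f"[ OK ] {stage.name}")
    return True, executed


if __name__ == "__main__":
    # Hypothetical stages: a plain DevOps pipeline would only have build/deploy;
    # the DevSecOps version interleaves security checks as gates.
    devsecops = [
        Stage("secret-scan", lambda: True, security_gate=True),
        Stage("build", lambda: True),
        Stage("sca", lambda: False, security_gate=True),  # simulate a vulnerable dependency
        Stage("sast", lambda: True, security_gate=True),
        Stage("deploy", lambda: True),
    ]
    ok, ran = run_pipeline(devsecops)
    print(ok, ran)  # deploy never runs because the SCA gate failed
```

Real CI servers express the same idea declaratively (a failing step fails the job), but the ordering matters in the same way: cheap checks such as secret scanning go first so that feedback arrives as early as possible.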

The last topic of Day 1 was Vulnerability Management, where we tinkered with tools like ArcherySec and set up our GitHub accounts.
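Vulnerability management tools like ArcherySec collect findings from many scanners in one place. The core idea can be sketched as below: normalize findings from different tools and de-duplicate them so the same issue is not triaged twice. The `Finding` fields, severity names and tool names are made up for illustration and do not reflect ArcherySec's actual data model or API.

```python
from dataclasses import dataclass
from typing import Dict, Iterable, List

SEVERITY_ORDER = {"critical": 0, "high": 1, "medium": 2, "low": 3, "info": 4}


@dataclass(frozen=True)
class Finding:
    tool: str
    title: str
    location: str  # file path or URL where the issue was found
    severity: str


def dedupe(findings: Iterable[Finding]) -> List[Finding]:
    """Keep one finding per (title, location), preferring the highest severity,
    and return them sorted from most to least severe."""
    best: Dict[tuple, Finding] = {}
    for f in findings:
        key = (f.title.lower(), f.location)
        current = best.get(key)
        if current is None or SEVERITY_ORDER[f.severity] < SEVERITY_ORDER[current.severity]:
            best[key] = f
    return sorted(best.values(), key=lambda f: SEVERITY_ORDER[f.severity])


if __name__ == "__main__":
    # Hypothetical results: two SAST tools report the same SQLi with different severities
    raw = [
        Finding("sast-tool-a", "SQL Injection", "app/UserDao.java", "high"),
        Finding("sast-tool-b", "SQL injection", "app/UserDao.java", "critical"),
        Finding("sca-tool", "Outdated library", "pom.xml", "medium"),
    ]
    for f in dedupe(raw):
        print(f.severity, f.title, f.location, f"(from {f.tool})")
```

Matching on a lower-cased title plus location is a crude heuristic; real platforms usually also map findings to CWE/CVE identifiers before deciding that two reports describe the same issue.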

Day 2

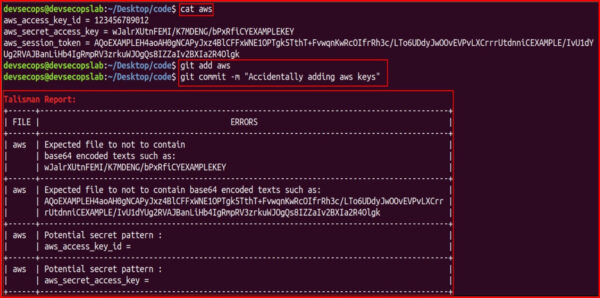

The six-hour session on Day 2 covered the CI and CD stages of the DevOps pipeline and how to improve them by building security into them. We learnt about pre-commit hooks and how to incorporate Talisman to inspect code for leaked secrets, and for managing secrets we looked at HashiCorp Vault.

Talisman Snapshot
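Talisman hooks into git (pre-commit or pre-push) and, among other checks, looks for known secret patterns and high-entropy encoded strings in outgoing changes. The sketch below is not Talisman; it is a tiny illustration of two of those ideas that could be called from a pre-commit hook: a regex for AWS-style access key IDs and private-key headers, and a Shannon-entropy check for long random-looking tokens. The patterns, the 20-character token length and the 4.0 bits-per-character threshold are assumptions chosen for the example, and for simplicity it reads the working-tree files rather than the staged blobs.

```python
import math
import re
import sys
from collections import Counter
from typing import List, Tuple

# Illustrative patterns only; real tools ship far larger rule sets.
AWS_KEY_ID = re.compile(r"\bAKIA[0-9A-Z]{16}\b")
PRIVATE_KEY = re.compile(r"-----BEGIN (?:RSA |EC |OPENSSH )?PRIVATE KEY-----")
TOKEN = re.compile(r"[A-Za-z0-9+/=_-]{20,}")  # long base64/hex-like tokens
ENTROPY_THRESHOLD = 4.0  # assumed bits per character


def shannon_entropy(s: str) -> float:
    """Average information per character, in bits."""
    counts = Counter(s)
    n = len(s)
    return -sum(c / n * math.log2(c / n) for c in counts.values())


def find_secrets(text: str) -> List[Tuple[int, str]]:
    """Return (line_number, reason) for every line that looks like it leaks a secret."""
    hits = []
    for no, line in enumerate(text.splitlines(), start=1):
        if AWS_KEY_ID.search(line):
            hits.append((no, "AWS access key ID"))
        elif PRIVATE_KEY.search(line):
            hits.append((no, "private key header"))
        elif any(shannon_entropy(t) > ENTROPY_THRESHOLD for t in TOKEN.findall(line)):
            hits.append((no, "high-entropy string"))
    return hits


def main(paths: List[str]) -> int:
    failed = False
    for path in paths:
        with open(path, encoding="utf-8", errors="ignore") as fh:
            for no, reason in find_secrets(fh.read()):
                print(f"{path}:{no}: possible {reason}")
                failed = True
    return 1 if failed else 0


if __name__ == "__main__" and len(sys.argv) > 1:
    # e.g. from .git/hooks/pre-commit:
    #   python check_secrets.py $(git diff --cached --name-only --diff-filter=ACM)
    sys.exit(main(sys.argv[1:]))  # a non-zero exit status aborts the commit
```

Entropy checks trade false positives (hashes, UUIDs) against false negatives (low-entropy passwords), which is why tools like Talisman also let you maintain an ignore file for known-safe matches.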

After covering Continuous Integration (CI), we moved on to concepts revolving around Continuous Delivery (CD). We started with Software Composition Analysis (SCA) and why it is important to look for vulnerable third-party components used in our software. In the lab environment, which is based on Java and the Struts framework, we tried out a tool called Dependency Checker and saw in action how it compares the project’s dependencies against known vulnerabilities.
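Conceptually, an SCA tool resolves the project's dependencies and matches each name/version pair against a database of known-vulnerable version ranges. A minimal sketch of that matching step follows. The package names and advisory IDs are hypothetical placeholders, and real tools match on identifiers such as CPEs or package URLs and handle far messier version schemes than the plain dotted numbers assumed here.

```python
from typing import Dict, List, Tuple

Version = Tuple[int, ...]


def parse_version(v: str) -> Version:
    """Parse a plain dotted version like '2.5.10' (assumes numeric parts only)."""
    return tuple(int(part) for part in v.split("."))


# Hypothetical advisory data: package -> list of (advisory id, first affected, first fixed)
ADVISORIES: Dict[str, List[Tuple[str, str, str]]] = {
    "example-web-framework": [("ADV-0001", "2.0.0", "2.5.13")],
    "example-json-lib": [("ADV-0002", "1.0.0", "1.2.4")],
}


def vulnerable(deps: Dict[str, str]) -> List[Tuple[str, str, str]]:
    """Return (package, version, advisory) for each dependency inside an affected range."""
    hits = []
    for name, version in deps.items():
        v = parse_version(version)
        for adv_id, introduced, fixed in ADVISORIES.get(name, []):
            # affected if introduced <= v < fixed (tuples compare element by element)
            if parse_version(introduced) <= v < parse_version(fixed):
                hits.append((name, version, adv_id))
    return hits


if __name__ == "__main__":
    project_deps = {"example-web-framework": "2.5.10", "example-json-lib": "1.2.4"}
    print(vulnerable(project_deps))  # only the framework version falls in an affected range
```

Comparing versions as integer tuples rather than strings is the important detail: as strings, "2.5.10" would sort before "2.5.9".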

Moving forward, we looked at SAST (Static Application Security Testing), also known as automated white-box testing, which is useful for finding low-hanging fruit like SQLi, XSS, insecure library usage, etc. For this we used Semgrep, which lets you write simple, code-like patterns (as well as regular expressions) to find vulnerable code. This was followed by DAST (Dynamic Application Security Testing), also known as automated black-box testing. For this we used OWASP ZAP to spider the application and run test cases against the discovered HTTP requests to check for specific vulnerabilities.
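To show the basic idea behind pattern-based SAST, here is a deliberately naive, regex-only checker that flags Java lines building SQL queries by string concatenation (a common SQLi smell) and uses of weak hash algorithms. Semgrep itself is considerably smarter, since its rules match on the parsed code structure rather than raw text, so treat this as an illustration of the concept, not of Semgrep's engine. The rule IDs and patterns are hypothetical.

```python
import re
from typing import List, Tuple

# Hypothetical rules: (rule id, regex, message)
RULES = [
    (
        "java-sqli-concat",
        re.compile(r'(executeQuery|executeUpdate|prepareStatement)\s*\(\s*"[^"]*"\s*\+'),
        "SQL built with string concatenation; use a parameterized query",
    ),
    (
        "java-weak-hash",
        re.compile(r'MessageDigest\.getInstance\(\s*"(MD5|SHA-?1)"\s*\)'),
        "Weak hash algorithm",
    ),
]


def scan(source: str) -> List[Tuple[int, str, str]]:
    """Return (line_number, rule_id, message) for every rule match."""
    findings = []
    for no, line in enumerate(source.splitlines(), start=1):
        for rule_id, pattern, message in RULES:
            if pattern.search(line):
                findings.append((no, rule_id, message))
    return findings


if __name__ == "__main__":
    java = '''
    String q = request.getParameter("id");
    ResultSet rs = stmt.executeQuery("SELECT * FROM users WHERE id = " + q);
    MessageDigest md = MessageDigest.getInstance("MD5");
    PreparedStatement ps = conn.prepareStatement("SELECT * FROM users WHERE id = ?");
    '''
    for finding in scan(java):
        print(finding)  # the parameterized prepareStatement line is not flagged
```

The weakness of the line-by-line regex approach is easy to see: if the query string is built on one line and passed to `executeQuery` on the next, this checker misses it, while a structure-aware tool can follow the variable.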

This brought us to the end of the Day 2 session.

Day 3

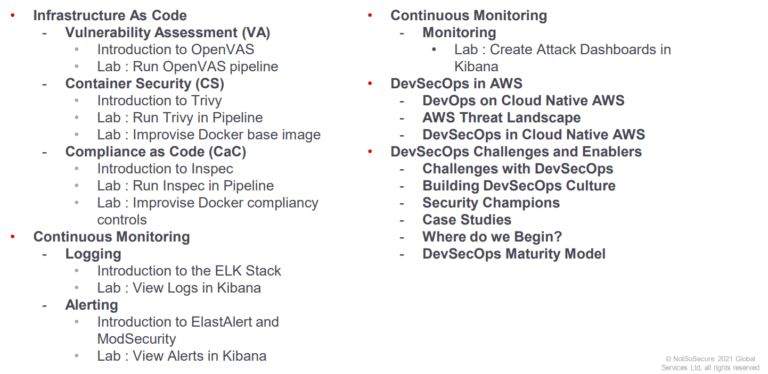

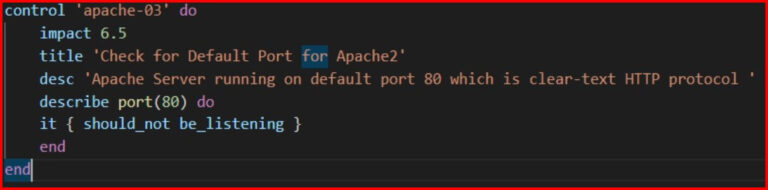

Day 3 started off with the topic of Infrastructure as Code (IaC), which covered subtopics such as Vulnerability Assessment (VA), Container Security (CS) and Compliance as Code (CaC).

We tried out OpenVAS for Vulnerability Assessment, which can scan infrastructure servers, network devices, IoT devices, etc. IaC allows us to document, version-control and audit the infrastructure. Docker/K8s infrastructure relies on base images, and these base images need to be minimal (fewer pre-installed packages) to avoid as many vulnerabilities as possible. To check the containers for vulnerabilities, we used Trivy. It checks the packages installed in the base OS for vulnerabilities, using a vulnerability database that it updates and maintains from a set of sources.

Comparison between two container base images: the slim base image has fewer vulnerabilities.
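A base-image comparison like the one above can be reproduced from Trivy's JSON output. The sketch assumes the layout of a report generated with `trivy image --format json` (a top-level `Results` list whose entries may carry a `Vulnerabilities` list, each item with a `Severity` field); field names can shift between Trivy versions, so check against a real report before relying on it. The image names in the usage comment are placeholders.

```python
import json
import sys
from collections import Counter
from typing import Dict


def severity_counts(report: dict) -> Dict[str, int]:
    """Count vulnerabilities per severity in a Trivy JSON report (assumed layout)."""
    counts: Counter = Counter()
    for result in report.get("Results", []):
        # "Vulnerabilities" can be missing or null when a target has none
        for vuln in result.get("Vulnerabilities") or []:
            counts[vuln.get("Severity", "UNKNOWN")] += 1
    return dict(counts)


def compare(report_a: dict, report_b: dict) -> None:
    """Print per-severity counts for two reports side by side."""
    a, b = severity_counts(report_a), severity_counts(report_b)
    for sev in ["CRITICAL", "HIGH", "MEDIUM", "LOW", "UNKNOWN"]:
        print(f"{sev:<9} {a.get(sev, 0):>5} {b.get(sev, 0):>5}")
    print(f"{'TOTAL':<9} {sum(a.values()):>5} {sum(b.values()):>5}")


if __name__ == "__main__" and len(sys.argv) == 3:
    # Usage (placeholder image names):
    #   trivy image --format json --output full.json python:3.9
    #   trivy image --format json --output slim.json python:3.9-slim
    #   python compare_images.py full.json slim.json
    with open(sys.argv[1]) as fa, open(sys.argv[2]) as fb:
        compare(json.load(fa), json.load(fb))
```

Counting by severity, rather than just totals, makes the comparison fairer: a slim image with a few HIGH findings can still be worse than it looks if most of the full image's findings are LOW.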

For me, the Compliance as Code section was new, different and interesting. Compliance requirements come either from industry standards or from organization-specific policies. They are essentially a set of rules and hence can be written as test cases.
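As a minimal sketch of "compliance rules as test cases", the checks below parse an SSH daemon config and verify a few hardening rules of the kind found in common benchmarks. The control IDs and the specific rules are illustrative assumptions, not a complete or authoritative baseline; dedicated compliance-as-code tools (for example, InSpec-style test suites) do this far more thoroughly.

```python
from typing import Dict, List, Tuple


def parse_sshd_config(text: str) -> Dict[str, str]:
    """Parse 'Keyword value' lines into a dict with lower-cased keywords.

    sshd_config keywords are case-insensitive, and for most keywords the first
    value obtained wins. Simplification: Match blocks are not handled.
    """
    settings: Dict[str, str] = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#"):
            continue
        parts = line.split(None, 1)
        if len(parts) == 2:
            settings.setdefault(parts[0].lower(), parts[1].strip())
    return settings


# Each rule is (control id, keyword, required value); ids are hypothetical.
RULES: List[Tuple[str, str, str]] = [
    ("SSH-01", "permitrootlogin", "no"),
    ("SSH-02", "passwordauthentication", "no"),
    ("SSH-03", "x11forwarding", "no"),
]


def check(settings: Dict[str, str]) -> List[Tuple[str, bool]]:
    """Return (control id, passed) for every rule. A missing keyword fails,
    since the check cannot prove that the default value is compliant."""
    return [(cid, settings.get(key) == want) for cid, key, want in RULES]


if __name__ == "__main__":
    sample = """
    # hardened config
    PermitRootLogin no
    PasswordAuthentication yes
    """
    for cid, passed in check(parse_sshd_config(sample)):
        print(cid, "PASS" if passed else "FAIL")
```

Because the rules are plain data plus a test function, they can live in version control next to the IaC code and run in the same pipeline, which is the whole point of treating compliance as code.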

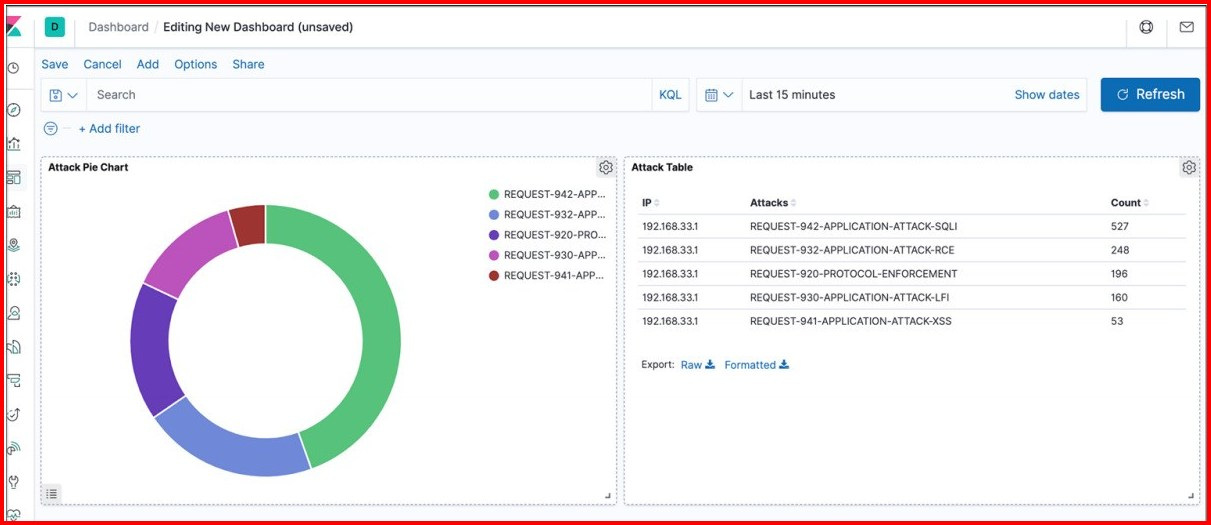

With all the controls in place, we moved on to Continuous Monitoring, which includes Logging, Monitoring and Alerting. We learnt how to set up the ModSecurity WAF to block attacks and then set up Kibana as a log monitoring system to analyze logs and create attack dashboards.

Kibana Dashboard
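ModSecurity evaluates each request against a rule set (commonly the OWASP Core Rule Set) and can block or log matches, and Kibana then visualizes those logs. The sketch below is a toy stand-in for that block, log and alert flow: a couple of regex "rules" applied to request parameters, followed by counting blocked requests per client IP and raising an alert above a threshold. The rules, request fields and threshold are hypothetical and far weaker than a real WAF.

```python
import re
from collections import Counter
from typing import Dict, List, Optional

# Hypothetical, intentionally simplistic rules: (rule id, pattern)
WAF_RULES = [
    ("SQLI-1", re.compile(r"(?i)(\bunion\b.+\bselect\b|'\s*or\s+'?1'?\s*=\s*'?1)")),
    ("XSS-1", re.compile(r"(?i)<\s*script\b")),
]


def inspect(params: Dict[str, str]) -> Optional[str]:
    """Return the id of the first rule that matches any parameter, else None."""
    for value in params.values():
        for rule_id, pattern in WAF_RULES:
            if pattern.search(value):
                return rule_id
    return None


def process(requests: List[dict], alert_threshold: int = 3) -> List[str]:
    """Block matching requests, log them, and alert on IPs with many blocks."""
    blocked_per_ip: Counter = Counter()
    log = []
    for req in requests:
        rule = inspect(req["params"])
        if rule:
            blocked_per_ip[req["ip"]] += 1
            log.append(f"BLOCK ip={req['ip']} rule={rule}")
    for ip, count in blocked_per_ip.items():
        if count >= alert_threshold:
            log.append(f"ALERT ip={ip} blocked={count}")
    return log


if __name__ == "__main__":
    reqs = [{"ip": "10.0.0.5", "params": {"id": "1' OR '1'='1"}}] * 3
    reqs.append({"ip": "10.0.0.7", "params": {"q": "hello"}})
    print("\n".join(process(reqs)))
```

In the real setup these responsibilities are split: the WAF only blocks and writes logs, while the aggregation and alerting happen in the log pipeline that feeds the dashboards.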

Day 4

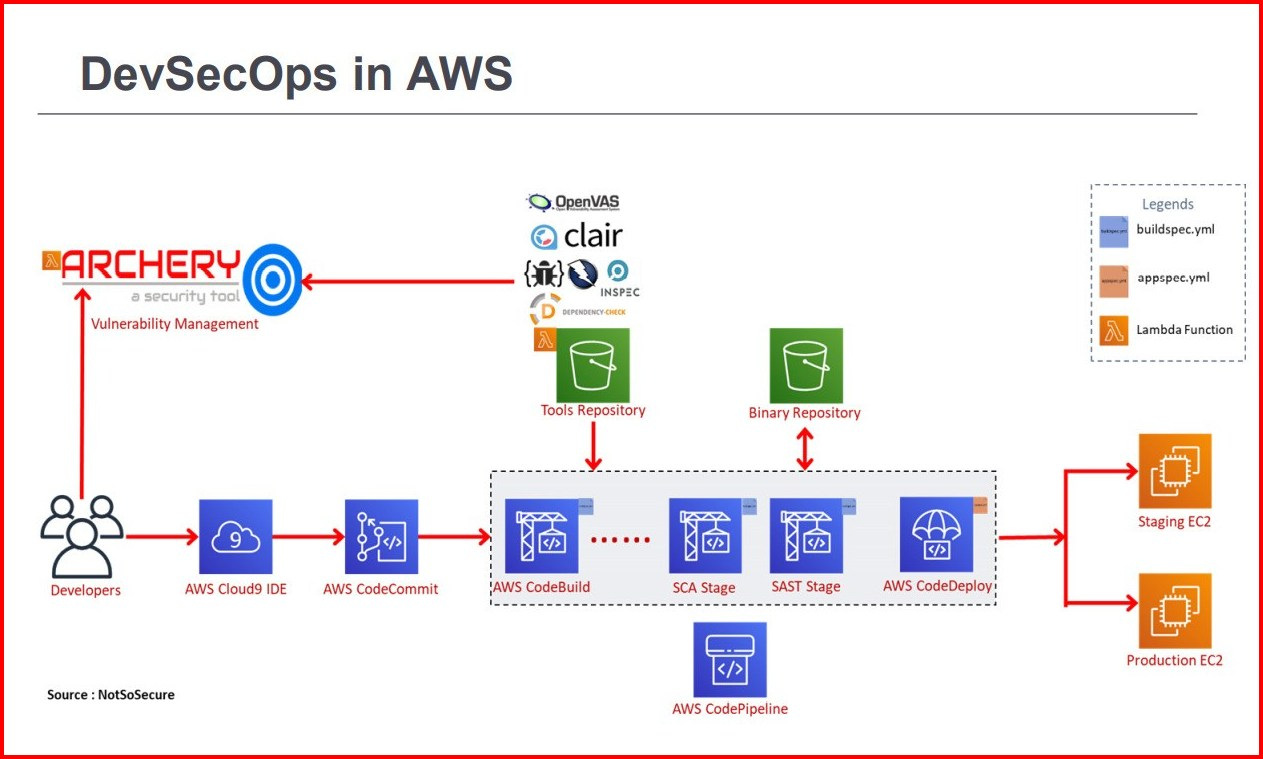

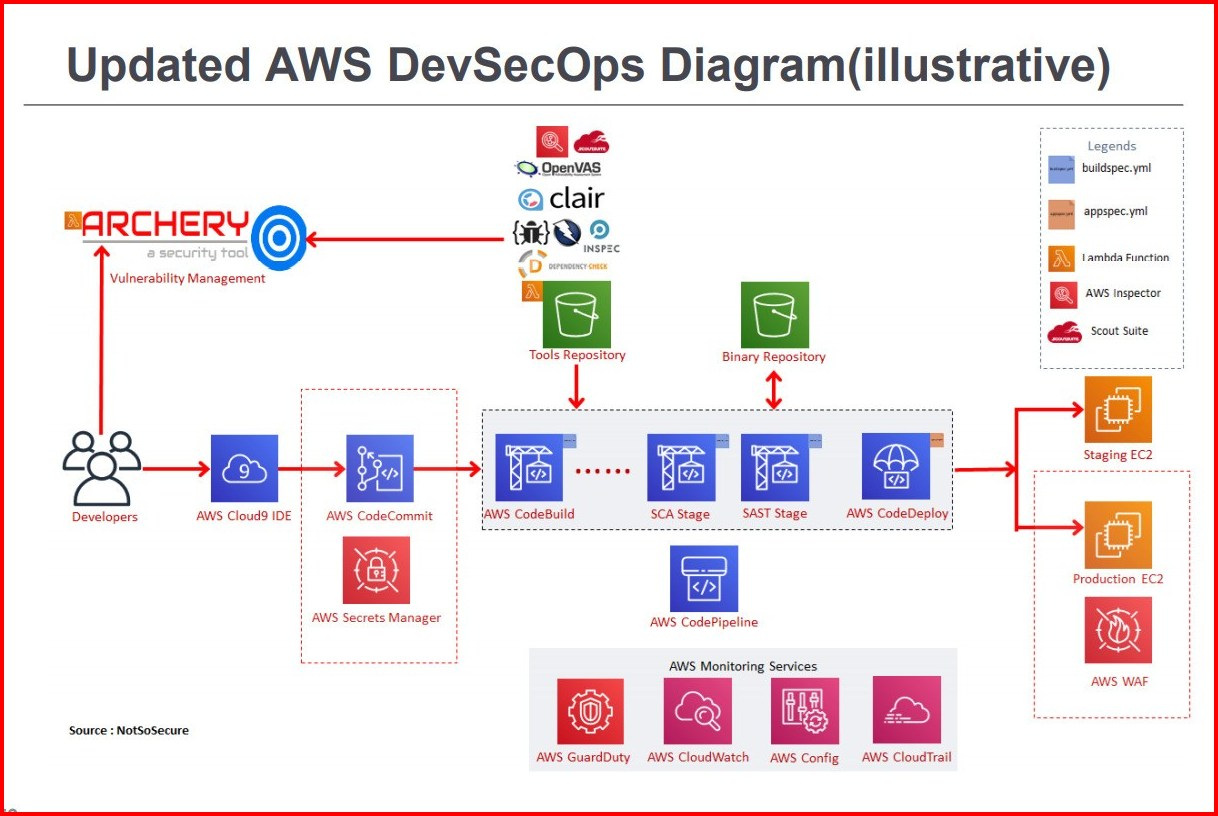

By the end of Day 3 we were almost done with the on-premise DevSecOps pipeline. Then Day 4, the final day of the training, arrived, and we started with DevSecOps in AWS. We learnt how AWS CodeBuild stages are used in place of a Jenkins pipeline. The tools have API access and are scriptable, so they can be converted into Lambda functions, with an Amazon S3 bucket used to store the tools' binaries.
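Because the tools are scriptable, each one can be wrapped in a small function that a pipeline stage invokes. A minimal sketch of such a security-gate function is below. The `lambda_handler(event, context)` entry point is the standard Python Lambda signature, but the event fields (`findings`, `max_severity`) and the returned pass/fail dict are hypothetical; a real setup would typically fetch and run the tool binary (for example from the S3 bucket mentioned above) and report the result back to the pipeline service instead of receiving findings in the event.

```python
from typing import Any, Dict

SEVERITY_RANK = {"LOW": 1, "MEDIUM": 2, "HIGH": 3, "CRITICAL": 4}


def lambda_handler(event: Dict[str, Any], context: Any) -> Dict[str, Any]:
    """Hypothetical security-gate Lambda.

    Expects an event like
      {"findings": [{"id": "...", "severity": "HIGH"}], "max_severity": "MEDIUM"}
    and fails the gate if any finding is more severe than max_severity.
    """
    limit = SEVERITY_RANK[event.get("max_severity", "MEDIUM")]
    blocking = [
        f for f in event.get("findings", [])
        if SEVERITY_RANK.get(f.get("severity", "LOW"), 0) > limit
    ]
    return {"passed": not blocking, "blocking": blocking}
```

Keeping the gate logic free of AWS SDK calls, as here, also makes it easy to unit-test locally by calling `lambda_handler(event, None)`.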

After walking through the basic pipeline in AWS, we analyzed the threat landscape in AWS:

- AWS Access Control, S3 bucket leaks, Excess Privileges, Permissions Review, etc.

- After that, we saw an updated diagram that covers most of these threats, showing how DevSecOps can be implemented in AWS.
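For the Excess Privileges and Permissions Review items, a first-pass check can be automated by scanning IAM policy documents for wildcard grants. The sketch assumes the standard IAM JSON policy layout (`Statement` entries with `Effect`, `Action` and `Resource`, where `Action`/`Resource` may be a string or a list, and `Statement` itself may be a single object). It ignores `NotAction`, `NotResource`, conditions and resource-based policies, so it is only a rough filter, not a replacement for a proper permissions review.

```python
from typing import Any, Dict, List


def _as_list(value: Any) -> List[str]:
    """IAM allows either a single string or a list of strings."""
    if value is None:
        return []
    return [value] if isinstance(value, str) else list(value)


def overly_broad(policy: Dict[str, Any]) -> List[str]:
    """Flag Allow statements that grant '*' or service-wide 'svc:*' actions,
    or that apply to Resource '*'. Returns human-readable findings."""
    statements = policy.get("Statement", [])
    if isinstance(statements, dict):  # a single statement may be given as an object
        statements = [statements]
    findings = []
    for i, stmt in enumerate(statements):
        if stmt.get("Effect") != "Allow":
            continue
        actions = _as_list(stmt.get("Action"))
        resources = _as_list(stmt.get("Resource"))
        sid = stmt.get("Sid", f"#{i}")
        for action in actions:
            if action == "*" or action.endswith(":*"):
                findings.append(f"{sid}: wildcard action '{action}'")
        if "*" in resources and actions:
            findings.append(f"{sid}: applies to all resources")
    return findings


if __name__ == "__main__":
    policy = {
        "Version": "2012-10-17",
        "Statement": [
            {"Sid": "ReadBucket", "Effect": "Allow", "Action": "s3:GetObject",
             "Resource": "arn:aws:s3:::example-bucket/*"},
            {"Sid": "TooMuch", "Effect": "Allow", "Action": ["s3:*"], "Resource": "*"},
        ],
    }
    for finding in overly_broad(policy):
        print(finding)
```

Deny statements are skipped on purpose: a broad Deny narrows permissions rather than widening them, so it is not an excess-privilege finding.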

The trainer also compared DevSecOps across the major cloud providers on several parameters, such as SCM, IaC, Firewall and Threat Detection, to name a few, to make it easier for cloud security practitioners to do their job.

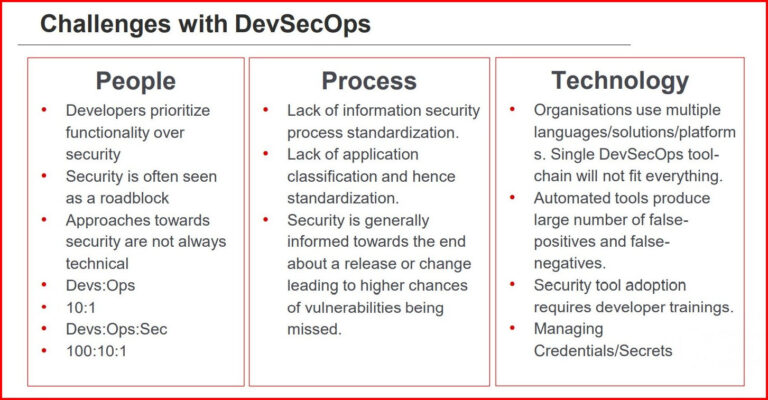

Towards the end of the training, the trainer addressed the challenges faced in DevSecOps and why DevSecOps alone is not enough. There are issues/vulnerabilities that these scanners/tools find difficult to detect, like Business Logic Flaws, Mass Assignment Vulnerabilities, Parameter Tampering, Access Control Flaws, Authorization Flaws, etc.

Challenges in DevSecOps

We learnt how to overcome these challenges and how to actually do DevSecOps. I would like to share my learnings from this section, as I believe they are essential for everyone to understand.

My learnings from this section are: build a culture around security, accept that not everything can be automated, encourage a security mindset in other teams as well, and embrace security as everyone’s responsibility.

DevSecOps is not a one-size-fits-all thing. Every organization and every technology pipeline is different. DevSecOps is a process, and everyone involved is responsible for what the pipeline produces; hence a security mindset is very much required.

In the end, we analyzed different case studies and pipelines for different programming languages, and learnt how to get started with DevSecOps.

And with this, the DevSecOps training came to an end, and so did my job as the moderator. Learning alongside such awesome people and such great mentors was an amazing experience and a real opportunity. Altogether it was a great event, with around 50 participants and 3 awesome mentors who delivered an awesome training; providing the participants with offline labs to keep practicing with was the cherry on top.

Post Training Screenshot

Thank You 🙂

To know about other trainings: